
Building An Institutional-Grade Oracle: Know Your Source Means Know Your Risk

The oracle problem has always been blockchain’s Achilles’ heel. For all the cryptographic guarantees and decentralized consensus mechanisms securing on-chain execution, most smart contracts ultimately depend on off-chain data delivered through black boxes. When a DeFi protocol liquidates a position or settles a perpetual contract, the price that triggers that action travels through layers of aggregators, node operators, and networks, each adding opacity and creating potential points of failure.

Kaiko’s Oracle represents an opportunity to fundamentally rethink this architecture. Rather than retrofitting transparency onto existing solutions, we built something different from the ground up: a licensed oracle system where every data point can be traced directly back to its source, where methodologies are documented and auditable, and where institutional compliance isn’t an afterthought but a foundational design principle.

The source transparency problem

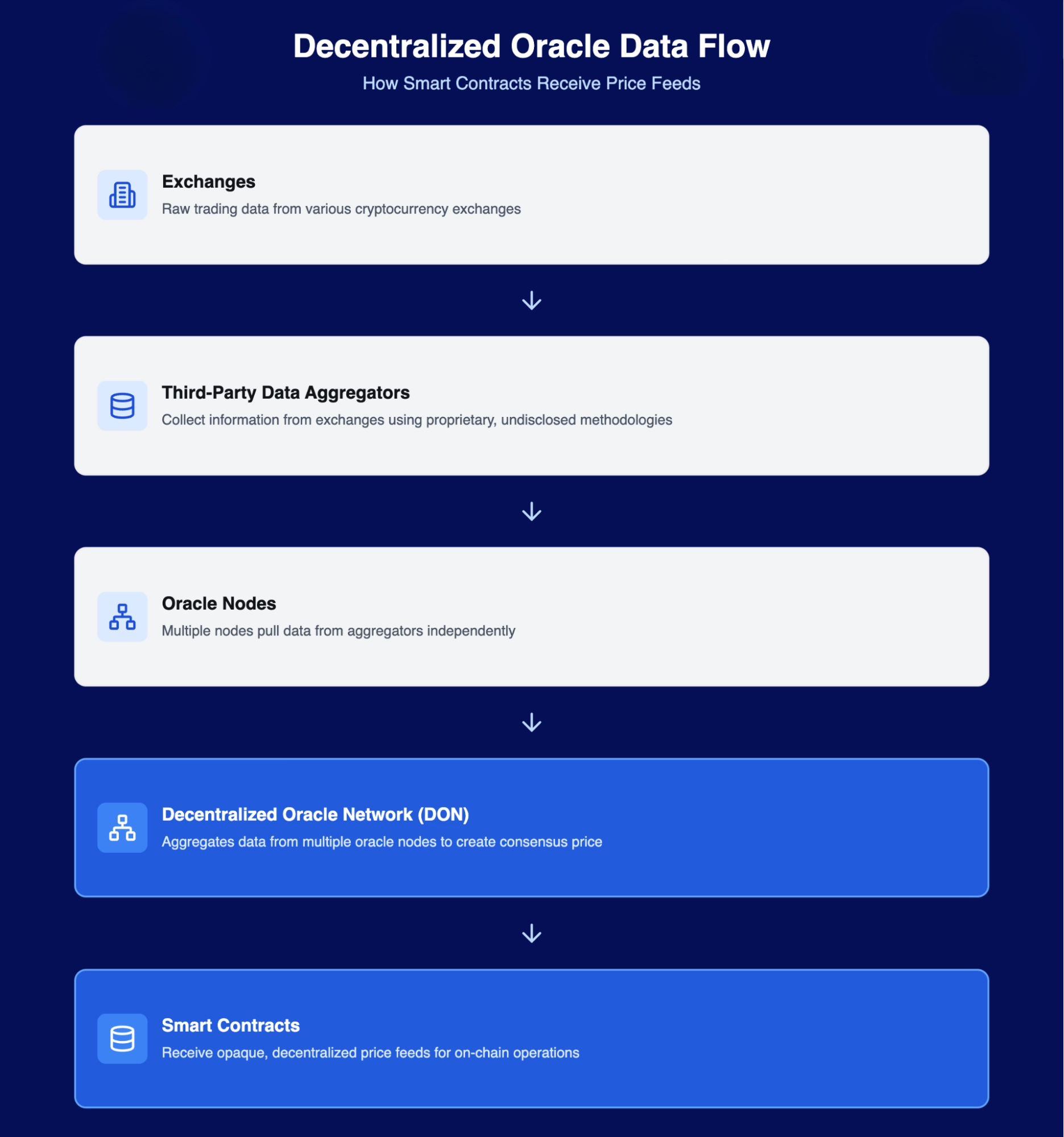

To understand why this matters, we need to examine how most oracle networks actually work. Take the dominant oracle model deployed across DeFi. Smart contracts receive price feeds from Decentralized Oracle Networks (DONs), which aggregate data from multiple oracle nodes. Each node, in turn, pulls data from third-party data aggregators. Those aggregators then collect information from exchanges using proprietary, undisclosed methodologies.

While you can verify which oracle nodes participated in a given feed on-chain, the data aggregators each node streams from remain completely opaque.

This multi-layered opacity creates unreplicable data. You cannot independently reconstruct the price that triggered your liquidation. You cannot verify which exchanges contributed to that calculation, and you cannot audit the methodology used to normalize outliers or weight venues. You’re trusting intermediaries who are themselves trusting other intermediaries.

The cost of black box aggregations

Oracle manipulation and failures have extracted billions in value from DeFi protocols. A few examples:

- Synthetix’s sKRW incident: an off-chain component failure caused the Korean won to be reported at 1000x its actual value. An arbitrage bot immediately exploited the error for over $1 billion in profit before it was caught.

- Compound Finance: in 2020, the DAI/USDC price on Coinbase Pro was manipulated by 30%. Because Compound’s oracle depended on that single exchange’s API, the move triggered approximately $90 million in wrongful liquidations.

- Mango Markets: in October 2022, attackers manipulated the MNGO token price through coordinated trading, exploiting the oracle’s reliance on thin liquidity to drain $117 million from the protocol.

- Balancer: in 2025, data quality issues enabled attackers to drain $128 million from Balancer pools. The vulnerability stemmed from oracle pricing that didn’t accurately reflect true market pricing, allowing arbitrageurs to exploit the discrepancy between on-chain reported prices and actual exchange rates.
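The single-exchange dependency in the Compound example can be illustrated with a minimal sketch. The venue names and quotes below are hypothetical, and the median is used only as a simple stand-in for a multi-venue aggregation; it is not a description of any specific oracle’s methodology.

```python
from statistics import median

def single_venue_price(quotes, venue):
    """Oracle that trusts the price reported by one exchange."""
    return quotes[venue]

def multi_venue_median(quotes):
    """Oracle that takes the median price across all venues."""
    return median(quotes.values())

# Hypothetical DAI/USD quotes; venue names and numbers are illustrative only.
normal = {"venue_a": 1.00, "venue_b": 1.01, "venue_c": 1.00}
spiked = {**normal, "venue_a": 1.30}  # one venue pushed up by 30%

print(single_venue_price(spiked, "venue_a"))  # 1.3
print(multi_venue_median(spiked))             # 1.01
```

With a single source, the manipulated quote passes straight through to the protocol. With several identifiable venues, the same spike barely moves the aggregated price, and the outlier venue can be named.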

protocols targeted by oracle exploits

Across these incidents, two critical problems expose fundamental flaws in traditional oracle architectures. The first is inaccurate pricing, which creates cascading liquidation events across entire protocols. The protocol executes flawlessly, but the oracle price fails to reflect actual market conditions, enabling attackers to drain millions.

Equally problematic is the absence of accountability. When anonymous oracle nodes provide faulty data causing millions in losses, there is no legal entity to pursue, no dispute resolution process, and no regulatory recourse.

When you don’t know where your price came from, you can’t verify it was legitimate until after the damage is done. Traditional financial data providers offer contracts and legal frameworks for such failures. Institutional participants operating under fiduciary duties cannot accept infrastructure where no one is responsible when things go wrong, so anonymous oracle networks are incompatible with their requirements.

Direct source architecture

Market data is not free or public domain. Exchanges, data providers, and benchmark administrators invest heavily in infrastructure, quality assurance, and regulatory compliance. This creates valuable IP that must be protected and properly licensed. For instance, Bloomberg doesn’t allow unlimited redistribution of its terminal data, and Refinitiv enforces strict licensing for its feeds.

Kaiko maintains licensed redistribution agreements with over 100 centralized and decentralized exchanges, each with specific terms governing how that data can be used and by whom. There are no intermediary data aggregators applying proprietary methods. When you request KK_RFR_BTCUSD through the Canton Oracle, that rate is calculated directly from exchange trade data, using documented methodologies, from venues you can identify.

Every data point includes unique identifiers from the source exchange, which allows for “precise reconstruction of any calculation at any point in time.” If a rate triggered a liquidation, you can trace it back to the specific trades on specific exchanges that contributed to that calculation.
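A minimal sketch of what this kind of reconstruction could look like follows. It assumes a published rate carries the (exchange, trade id) pairs that fed it, and it uses a plain volume-weighted average as a stand-in calculation. The `Trade` type, the function names, and the sample trades are all hypothetical and do not represent Kaiko’s actual methodology or data model.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Trade:
    exchange: str   # source venue
    trade_id: str   # identifier assigned by the source exchange
    price: float
    amount: float

def vwap(trades):
    """Volume-weighted average price (stand-in methodology)."""
    total = sum(t.amount for t in trades)
    return sum(t.price * t.amount for t in trades) / total

def publish_rate(trades):
    """Publish a rate together with the identifiers of its inputs."""
    return {
        "rate": vwap(trades),
        "sources": [(t.exchange, t.trade_id) for t in trades],
    }

def reconstruct(published, archive):
    """Recompute a published rate from archived trades."""
    trades = [archive[key] for key in published["sources"]]
    return vwap(trades)

# Hypothetical trades from two venues.
trades = [
    Trade("exch_a", "t-1", 100.0, 1.0),
    Trade("exch_b", "t-9", 102.0, 3.0),
]
archive = {(t.exchange, t.trade_id): t for t in trades}

published = publish_rate(trades)
print(published["rate"])                                     # 101.5
print(reconstruct(published, archive) == published["rate"])  # True
```

Because the inputs are named, anyone holding the archived trades can rerun the calculation and compare the result with the rate that was delivered.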

Kaiko operates as an EU BMR-compliant Regulated Benchmark Administrator, adhering to IOSCO principles for financial benchmarks. This regulatory framework mandates transparent, auditable rate methodologies rather than proprietary black boxes. It requires documented exchange selection criteria, clear attribution of data sources, and regular independent audits.

This compliance framework is particularly critical for traditional asset classes such as equities, foreign exchange, and commodities, where institutional investors and regulators have long-established expectations around data quality and vendor accountability. For tokenized products holding these assets, or operating on institutional platforms like Canton focused on RWA tokenization, using a compliant data provider ensures that on-chain valuations meet the same rigorous standards as their off-chain counterparts.

The entire flow is recorded on Canton’s distributed ledger, creating an immutable audit trail that traditional oracle networks struggle to provide. Every request has a corresponding response. Every response has a documented cost. Every rate delivered can be independently verified against Kaiko’s reference data infrastructure, and traced back through Kaiko’s regulated infrastructure to underlying exchange sources.
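The request-and-response pairing described above can be sketched as a simple audit check. This is plain Python over a hypothetical list of ledger events, not Daml or any Canton API; the field names, the rate, and the cost are assumptions made for illustration.

```python
def unmatched_requests(events):
    """Return ids of requests lacking a response that carries a rate and a cost."""
    responses = {
        e["request_id"]: e for e in events if e["type"] == "response"
    }
    missing = []
    for e in events:
        if e["type"] != "request":
            continue
        reply = responses.get(e["request_id"])
        if reply is None or "rate" not in reply or "cost" not in reply:
            missing.append(e["request_id"])
    return missing

# Hypothetical ledger events; values are illustrative only.
ledger = [
    {"type": "request", "request_id": "r-1", "feed": "KK_RFR_BTCUSD"},
    {"type": "response", "request_id": "r-1", "rate": 64000.0, "cost": 0.5},
    {"type": "request", "request_id": "r-2", "feed": "KK_RFR_BTCUSD"},
]

print(unmatched_requests(ledger))  # ['r-2']
```

An auditor running a check of this shape over the recorded flow would see immediately which requests were never answered or were answered without a documented cost.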

This is auditability at every layer. Institutional risk committees can verify what data was requested, what was delivered, what it cost, and most critically, where it came from. Kaiko’s oracle eliminates the aggregator black box layer entirely.

Why this architecture wins

Multi-chain architectures become increasingly challenging to scale because each price feed on each chain requires a dedicated decentralized oracle network. Complexity grows multiplicatively: the number of deployments is the number of assets times the number of chains, so every new asset or chain adds operational overhead across the whole grid. Node operator diversity requirements compound across each deployment, and when data aggregators remain opaque, verification bottlenecks multiply with every new feed.
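The scaling described above comes down to a product of assets and chains. A small worked example, with asset and chain counts chosen purely for illustration:

```python
def dedicated_networks(assets: int, chains: int) -> int:
    """One dedicated oracle network per (asset, chain) pair."""
    return assets * chains

base = dedicated_networks(50, 10)            # 500 deployments
one_more_chain = dedicated_networks(50, 11)  # 550 deployments
one_more_asset = dedicated_networks(51, 10)  # 510 deployments

print(one_more_chain - base)  # 50
print(one_more_asset - base)  # 10
```

Under these assumed numbers, adding a single chain means standing up 50 new networks, and adding a single asset means 10 more, each with its own node operators to vet.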

A single connection to Kaiko provides access to over 100 exchanges. The data arrives already normalized, standardized, and quality-assured at the source, so applications do not need to replicate these processes. When Kaiko adds new exchanges to its infrastructure, all Canton participants benefit immediately. Perhaps most importantly, regulatory compliance isn’t bolted on after the fact; it’s part of the foundation.

The request-response model Kaiko uses offers further advantages that free oracle models struggle to provide. Because data requests require payment, the oracle naturally resists spam and sustains the economics of high-quality data infrastructure.

The institutional adoption thesis

This architecture matters because the next wave of on-chain adoption won’t come from protocols willing to accept “good enough” data quality. It will come from institutional participants whose risk committees demand auditability, whose regulators require documented data provenance, and whose compliance teams need to defend every input to every calculation.

When a traditional financial institution launches tokenized securities or perpetual markets, its external auditors will ask a simple question:

Can you prove where this price came from?

With Kaiko’s Oracle, the answer is “yes, with exchange-level attribution, documented methodologies, and regulatory oversight.” When a corporate treasury like Tharimmune builds applications on the network, it needs pricing infrastructure that meets institutional standards: not “decentralized enough” but demonstrably compliant.

The recent launch of equity and commodity perpetuals on Markets by Kinetiq also illustrates this opportunity. Perpetuals on traditional assets trade 24/7, so they require continuous reference pricing even when underlying markets are closed.

The perpification of everything

As digital asset markets mature, we’re seeing a structural shift. Perpetual contracts are expanding from crypto-native assets to stocks, commodities, indices, and eventually any tradeable instrument. But this “perpification of everything” only works at scale if the underlying pricing infrastructure can meet institutional standards. You cannot build a liquid, globally accessible perpetual market if your price feed relies on unnamed data aggregators using undocumented methodologies.

Canton’s architecture, combined with Kaiko’s regulated benchmark rates, makes this feasible. It provides 24/7 pricing for traditional financial assets even when underlying markets are closed, with auditable data lineage from exchange to settlement and regulatory compliance built into the infrastructure layer. The result is perpetual markets that institutional participants can actually use, because they can verify the data, audit the methodology, and demonstrate compliance to regulators.

Traditional oracle networks are optimized for decentralization and censorship resistance. Those are valuable properties. But they’re not sufficient for regulated financial products. This is why we built not just an oracle, but an institutional-grade pricing infrastructure.


Perspectives

New York

Building An Institutional-Grade Oracle: Know Your Source Means Know Your Risk

Most smart contracts rely on opaque off-chain data feeds. Learn how oracle architecture works, where it fails, and how transparent, auditable design is key.

05/03/2026
